Journal of Mental Health and Social Behaviour Volume 1 (2019), Article ID: JMHSB-102

https://doi.org/10.33790/jmhsb1100102

Research Article

An Increasing Bilateral Advantage in Chinese Reading

Yuju Chou1*, Richard Shillcock2

1*Department of Counseling and Clinical Psychology, National Dong Hwa University. No. 1, Sec. 2, Dashieh Road, Shofeng, Hualien, 974, Taiwan.

2 School of Informatics, University of Edinburgh, 10 Crichton Street, Edinburgh, EH8 9AB, United Kingdom.

Corresponding Author Details: Yuju Chou, Department of Counseling and Clinical Psychology, National Dong Hwa University. No. 1, Sec. 2, Dashieh Road, Shofeng, Hualien, 974, Taiwan. E-mail: yjchou@gms.ndhu.edu.tw

Received date: 28th February, 2019

Accepted date: 04th April, 2019

Published date: 24th May, 2019

Citation: Chou, Y., & Shillcock, R. (2019). An Increasing Bilateral Advantage in Chinese Reading. J Ment Health Soc Behav 1: 102.

Copyright: ©2019, This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Abstract

Researchers have shown a bilateral advantage for English words presented simultaneously to both visual hemifields. Given that hemispheric lateralisation in Chinese does not appear to be as robust or extreme as in English, the authors examined the bilateral advantage with Chinese characters and tested hypotheses regarding task complexity, Gestalt perception, redundancy gain, and foveal splitting. The results show a tendency towards a bilateral advantage as word length increases, supporting the hypothesis that the two hemispheres collaborate to complete a task only when its complexity reaches a critical level.

Keywords: Bilateral advantage, Chinese character, Word length effect, Split fovea model, Hemispheric lateralization, Gestalt perception

Introduction

The bilateral effect is generally defined in two ways [1]. The first is the overall processing advantage that occurs when the same stimulus is presented simultaneously to both visual hemifields; Banich [2] describes this effect in terms of the difference between within-hemisphere and across-hemisphere processing. The second is an increase in the field difference, or asymmetry, when different stimuli are presented to the two fields, compared with a single stimulus presented in only one field. For example, Iacoboni et al. [3] presented two different items in the two visual fields, using an arrow to indicate which was the target word and which the distracter. The present experiment adopts the first definition.

Many hypotheses have been proposed to explain the bilateral effect. Boles [1] divided these hypotheses into two broad classes: hemispheric interaction hypotheses and non-interaction hypotheses. According to the hemispheric interaction hypotheses, the effect arises from an interaction between the hemispheres that is either inhibitory or facilitatory, depending on the hypothesis. According to the non-interaction hypotheses, the bilateral effect is an artefact of how bilateral displays are processed. Both classes of hypotheses are concerned with interhemispheric transfer, integration, and cooperation.

Metacontrol studies in English

Mohr et al. [4] reported an advantage for function words over content words when the words were presented in the right visual field (RVF), but the reverse when they were presented in the left visual field (LVF). They concluded that each hemisphere seems to be equipped with its own version of the lexicon. If so, when two identical items are displayed simultaneously, one might expect interhemispheric inhibition, independence, cooperation, or a complex pattern of inhibition and excitation. Prior studies have provided evidence for so-called "metacontrol", in which one hemisphere dominates processing in certain linguistic and visual tasks. For example, Hellige et al. [5] found in a letter-comparison task that the pattern of performance under bilateral presentation was similar to that found with RVF presentation, suggesting that the left hemisphere (LH) is dominant in this task. Conversely, an experiment by Hellige, Taylor et al. [6] showed that, with bilateral presentation, the recognition of consonant syllables resembled the pattern associated with the right hemisphere (RH), suggesting that the RH dominates the LH in this task. More generally, however, bilateral presentation tends to improve performance compared with unilateral presentation, creating a bilateral advantage or "superadditive" effect.

Languages differ in the degree to which visual information maps onto specific phonological representations. In Spanish, with its transparent orthography, letter patterns and their pronunciations are in almost one-to-one correspondence [7]. In effect, it is possible to pronounce written Spanish words correctly without understanding their meanings. In contrast, pronouncing Chinese characters requires stored representations of each character, although most characters contain a phonetic radical that provides a cue, of varying reliability, to the phonological representation of the whole character.

In a series of studies, Zhang et al. [8] investigated the bilateral advantage using Chinese characters. On every trial, three characters were presented simultaneously, two of which might match. Participants were asked to press a "yes" button with their index finger when two of the three characters matched each other in orthography, phonology, or semantics, and a "no" button when there was no such match. Matching pairs were presented either unilaterally (within one visual hemifield, with the third character presented in the other hemifield as a distracter) or bilaterally (across both hemifields, with the third character presented in one visual field as a distracter). The occurrence of the bilateral effect depended on the attributes of the characters that the readers had to process. When the task was to match two characters that were homophones or synonyms, bilateral presentation produced better performance than unilateral presentation; however, this bilateral advantage did not occur when visually similar characters were matched. Zhang et al. [8, 9] concluded that processing semantic and phonological attributes is more difficult than processing orthographic attributes. Their results seem to reflect the computational complexity of the task: in more complex tasks, it is advantageous to have the two hemispheres collaborate rather than to rely on just one. In the next section, we discuss how task complexity interacts with hemispheric interaction.

Task complexity

As noted by Mechelli et al. [10], increasing word length increases the demands on the processing of both local features and global shapes. Reading Chinese is a more complex task than reading alphabetic languages, partly because the pronunciation of a character is not always identical to that of its phonetic radical. Moreover, the pronunciation of a particular character can change with context: about 10% of the most frequently used Chinese characters have more than one pronunciation. These pronunciation changes affect meaning, and the ambiguity must be resolved by referring to the preceding or following character. For example, in the Chinese word ‘ ’ (length), the first character ‘ ’ is pronounced ‘chang2’, whereas the same character in the second position of the Chinese word ‘ ’ (teacher) is pronounced ‘zhang3’. Thus, reading Chinese requires global processing of both the character itself and its context, which is partly available in parafoveal vision.

Evidence from split-brain patients supports the suggestion that interhemispheric processing aids task performance under high-load conditions: the difficulty of a task appears to interact with the strategy of hemispheric processing. As task difficulty increases, patients who are unable to transfer information via the cortical commissures show a greater decrement in performance [2]. If the task is lateralized to a single hemisphere, however, the performance of split-brain patients tends to be little worse than that of normal participants. The poorer performance of split-brain patients on demanding tasks implies that normal individuals cope with heavy processing loads by distributing them across the hemispheres.

In a series of studies with normal participants, Banich and colleagues [11-13] demonstrated a greater bilateral distribution advantage (BDA) when participants were asked to solve relatively complex tasks (e.g., deciding whether two letters are pronounced the same way) than when they solved less demanding tasks (e.g., deciding whether two letters are visually similar). The authors ascribed the advantage to a division of labour between the two hemispheres. This division of labour is also affected when processing is divided over time, that is, when the comparison is made across successively presented stimuli. For example, Weissman et al. [13] showed that the BDA disappeared with sequential presentation, in which case participants made the relevant comparisons faster when the materials were presented to just one hemisphere. Using simulations with a divided connectionist architecture, Monaghan et al. [14] found that "hemispheric" collaboration emerged spontaneously, supporting the finding that bilateral collaboration enhances performance on difficult tasks. They simulated shape-matching and name-matching tasks, using divided computational resources to represent the two hemispheres, and found that (a) the shape-matching task was easier for the model to learn than the name-matching task, which required more training; (b) performance was significantly better with bilateral than with unilateral presentation in the name-matching task, whereas type of presentation made no significant difference in the shape-matching task; and (c) the bilateral advantage was a consequence of divided processing, with reductions in interhemispheric resources increasing the training needed to simulate the behaviour.

Zhang et al. [15] claimed that the processing of Chinese characters is related not only to the number of strokes in a character but also to the number of radicals it contains. Chen et al. [16] suggested that radicals are the basic perceptual units of a character and that the stroke pattern (the radical) is more important for character recognition than the number of strokes.

The basic unit of word/character recognition remains a topic of ongoing controversy in the study of visual word recognition. Pelli et al. [17, 18] adopted a psychophysical approach to the recognition of letters and words in noise, comparing participants' ability to recognize characters from various alphabets, including Chinese. They showed that these alphabets vary in complexity, and that readers process more complex scripts less efficiently than less complex ones. Pelli et al. [18] defined complexity as the square of the perimeter of the letter/character divided by its "ink" area, measured in pixels, and defined efficiency relative to an ideal observer. Consistent with readers' intuitions, Chinese orthography was found to be more complex than English orthography. Pelli et al. reached the surprising conclusion that the brain's processing of words, as assessed by their identification-in-noise paradigm, uses the letter as the unit of analysis, even though massive exposure to words over years of reading might be expected to allow a larger unit. Instead, they claimed that letters, together with about 20 undefined visual features of subletters, are the units that are processed independently. Although they argued that this dependence on feature processing creates a processing bottleneck early in the visual pathway, there are reasons to believe that this is not the whole picture. At certain levels of processing, words are distinguished from orthographically legal nonwords. For instance, Cohen et al. [19] argued that the fusiform gyrus mediates the processing of whole words, labelling the region the visual word form area (VWFA). One reason why Pelli et al. [17, 18] did not find processing of whole words at the relatively peripheral level addressed by their task may be that such entities are themselves distributed across V1 in the two hemispheres, and it is at this level that the processing tapped by their task must occur. In summary, Pelli et al. provided psychophysical support for a relatively small number of undefined visual features that must be processed independently in word recognition, but the level of processing they addressed was lower than that of the systematic relationships between words/characters/radicals and phonology/semantics. We suggest that the latter level is the important one for the interhemispheric processing of Chinese characters.
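The complexity measure attributed to Pelli et al. [18] is a simple ratio, perimeter squared over ink area, and can be sketched on a binary bitmap. This is a minimal sketch, assuming the perimeter is estimated by counting the pixel edges that separate ink from background (a simplification; Pelli et al.'s own perimeter estimate may differ) and using tiny hypothetical bitmaps rather than real glyphs:

```python
def perimetric_complexity(bitmap):
    """Perimeter squared divided by ink area for a binary bitmap.

    bitmap: list of rows, each a list of 0 (background) or 1 (ink).
    The perimeter is approximated by counting ink-pixel edges that border
    background or the image boundary (an assumption, not Pelli et al.'s method).
    """
    rows = len(bitmap)
    cols = len(bitmap[0]) if rows else 0
    ink = 0
    perimeter = 0
    for r in range(rows):
        for c in range(cols):
            if not bitmap[r][c]:
                continue
            ink += 1
            # Count each of the four sides that touches background or the border.
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or not bitmap[nr][nc]:
                    perimeter += 1
    if ink == 0:
        raise ValueError("bitmap contains no ink")
    return perimeter ** 2 / ink


square = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]  # solid block
ring = [[1, 1, 1], [1, 0, 1], [1, 1, 1]]    # same block with a hole
print(perimetric_complexity(square), perimetric_complexity(ring))  # 16.0 32.0
```

Under this pixel-edge approximation, a solid square scores 16 at any size, while adding a hole (an inner contour) doubles the score. This illustrates why many-stroke characters, with many contours relative to their ink, come out as more complex than simple letters.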

Gestalt perception

An interpretation of the bilateral advantage with older historical roots attributes it to Gestalt perception resulting from the simultaneous presentation of two instances of the same item. Repeated objects are grouped perceptually according to three Gestalt principles: similarity, proximity (or contiguity), and continuity [20]. The principle of similarity states that things sharing the same visual characteristics, such as shape, size, color, texture, value, or orientation, are seen as belonging together. The principle of proximity or contiguity states that things that are closer together are seen as belonging together; for example, eight circles in a panel tend to be seen as four two-by-two groups when the horizontal rows of circles are close to one another. The principle of continuity states that continuous figures are preferred; for example, we may perceive a triangle overlapping three circles, rather than three separate circles, when each circle has a part missing.

According to the similarity principle, doubly presented items are usually seen as a single unit. Gestalt perception is generally regarded as relatively peripheral and perceptual, reflecting a segmentation or "parsing" of the visual world. When two words or characters are repeated in the visual field, this perceptual processing leads them to be seen as related, but the extensive similarity and one-to-one mapping found with repeated items seem to go beyond relatedness. The more detail that is repeated, the more scope there seems to be for a perceptually compelling matching of the two items; for instance, characters containing more strokes, and words containing more characters, appear to provide greater scope for such perceptual mapping. The next section explicitly addresses the issue of repeated words in the visual field.

Redundancy gain

Mohr et al. [21] introduced the notions of ‘redundancy gain’ and ‘ignition priming’ to refer, respectively, to the advantage of presenting two identical items simultaneously and the advantage of presenting them asynchronously with an SOA of 150–180 msec. Compared with other SOAs, this range facilitates word recognition, leading to higher accuracy and shorter response latencies in lexical decision, regardless of the left-right positions of the stimuli. Within the Hebbian cell-assembly framework [22], redundancy gain has been explained in functional terms as resulting from the double activation of the sensory cortices acting as neuronal summation devices; in other words, two versions of the same processing are computed simultaneously and then summed. Mohr et al. [21] explained ignition priming as the synchrony between the onset of the second stimulus and the ignition of the neural representation of the first stimulus. However, ignition priming might simply be a case of ‘perceptual learning’, that is, things experienced more often are processed more easily. The SOAs at which ignition priming was found may correspond to the timing of saccadic eye movements in normal reading, that is, the time needed for forward and regressive eye movements. Thus, the facilitating effect of a 150–180 msec. SOA might simply reflect the fact that the eyes usually take 150–200 msec. to remap letters or words during normal sentence reading.
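The summation account of redundancy gain can be illustrated with a toy accumulator: each copy of the word feeds the same evidence into a shared unit, and a response is triggered when the summed activation reaches a threshold. This is a hypothetical sketch, not Mohr et al.'s model; the rate, threshold, and time step are arbitrary assumptions, and perfect summation of two copies overstates any real latency gain.

```python
def time_to_threshold(n_copies, rate=1.0, threshold=100.0, dt=1.0):
    """Toy linear accumulator (hypothetical parameters).

    Each identical input adds `rate` units of activation per time step and
    the inputs are summed; returns the time at which the summed activation
    first reaches `threshold`.
    """
    if n_copies < 1 or rate <= 0 or dt <= 0:
        raise ValueError("need at least one input and positive rate and dt")
    activation = 0.0
    t = 0.0
    while activation < threshold:
        activation += n_copies * rate * dt  # summation across copies
        t += dt
    return t


single = time_to_threshold(1)  # one copy, as in unilateral presentation
double = time_to_threshold(2)  # two summed copies, as in bilateral presentation
print(single, double)  # 100.0 50.0
```

The design choice here is deliberately minimal: with noise-free linear summation, doubling the input simply halves the time to threshold, which makes the direction of the predicted effect explicit while leaving its size to empirical data.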

The current study is similar to the first experiment of [21], which compared two identical words presented simultaneously to the LVF and RVF with a single word presented unilaterally to the LVF or RVF. We hypothesized that, in a similar paradigm, a bilateral effect would be observed following the double presentation of Chinese characters, although the known differences between reading Chinese characters and reading alphabetic words make predictions beyond the perceptual level difficult. The long lexical decision latencies for long Chinese words may also influence the bilateral effect (cf. the exploration of different SOAs in [21]).

A new model based on the split-fovea hypothesis

As noted above, the bilateral effect can be explained with reference to hemispheric lateralisation and foveal splitting. Here we propose a specific causal explanation of the bilateral advantage based on foveal splitting, possible eye saccades, and the transfer of visual information from the initial bilateral or unilateral presentation of words to the visual cortex.

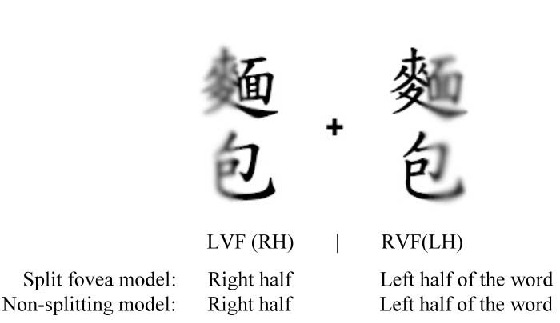

Bilateral presentation

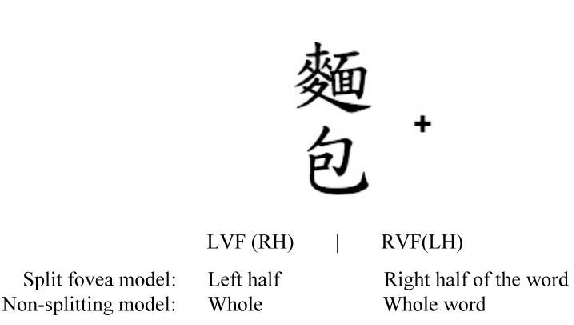

With bilateral presentation, the closer a part of a stimulus is to the central fixation point, the clearer its image [23]. As illustrated in Figure 1, the initial fixation sends the information in each visual field to the contralateral hemisphere. As a consequence, the right half of the stimulus in the LVF is transferred primarily to the RH, and the left half of the stimulus in the RVF is transferred primarily to the LH.

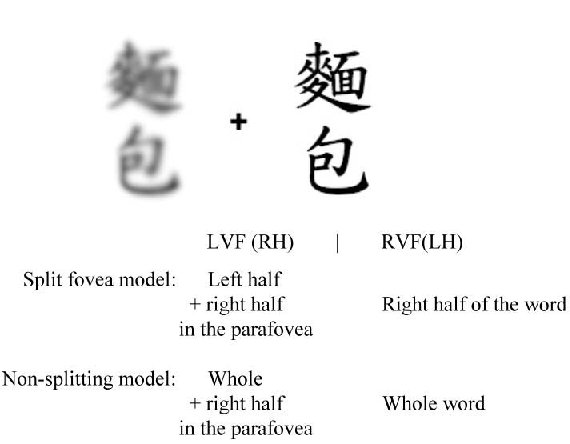

After an eye movement, word recognition typically proceeds with direct fixation on a single stimulus in the RVF or LVF, depending on which way fixation shifts. As shown in Figure 2, if a participant's fixation shifts to the RVF after the initial central fixation, the item in the RVF becomes directly fixated. The right half of this stimulus is projected to the LH and the left half to the RH. Because the parts of the image closest to the fixation point are the most clearly resolved during the initial fixation, bilateral presentation allows the two hemispheres to build up a complete image of the characters: following two consecutive fixations, both the RH and the LH have a whole image of the stimulus. In short, bilateral presentation completes the half images.

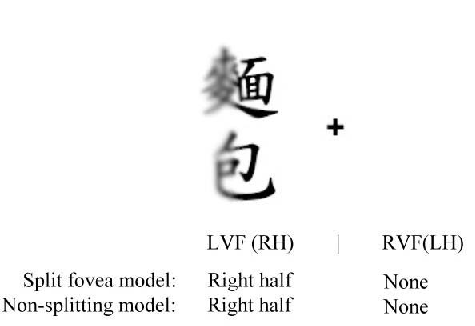

Unilateral presentation

The information captured from a unilateral presentation is not as complete as from a bilateral presentation. What happens during the initial eye fixation in a unilateral presentation is illustrated in Figure 3. When one fixates initially on the central point, only information about the right half of the stimulus in the LVF is projected to the RH.

After the eyes move to the stimulus, it becomes directly fixated, so that information about its right half is projected to the LH and information about its left half to the RH, as shown in Figure 4. Eventually, the RH creates an image of the whole stimulus presented unilaterally in the LVF, but the LH captures only the right half of the stimulus. Likewise, when a stimulus is presented unilaterally to the RVF, the LH creates a complete image of the stimulus, but the RH captures only its left half. According to this model (which is based on the split-fovea hypothesis), there should be a bilateral advantage for Chinese characters. Moreover, the typical division of these phonetically complex characters should accentuate the bilateral advantage compared with what is found in alphabetic languages.
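The projection rules of this model can be written out as simple bookkeeping of which stimulus halves each hemisphere has received after the central fixation and the second, direct fixation. This is a minimal sketch under stated assumptions: each half-stimulus is treated as going entirely to one hemisphere (a simplification of "primarily"), the second fixation lands directly on one copy of the stimulus, and the `split` flag is a hypothetical switch for contrasting the split-fovea account with an undivided fovea.

```python
LEFT, RIGHT = "left half", "right half"
BOTH = {LEFT, RIGHT}


def fixation_projection(position, split=True):
    """Halves of one stimulus that each hemisphere receives on one fixation.

    position: 'LVF' or 'RVF' (stimulus seen from the central fixation point)
    or 'fixated' (stimulus directly fixated after a saccade).
    """
    if position == "LVF":  # only the half nearest fixation reaches the RH
        return {"LH": set(), "RH": {RIGHT}}
    if position == "RVF":  # only the half nearest fixation reaches the LH
        return {"LH": {LEFT}, "RH": set()}
    if position == "fixated":
        if split:  # split fovea: each half is projected contralaterally
            return {"LH": {RIGHT}, "RH": {LEFT}}
        return {"LH": set(BOTH), "RH": set(BOTH)}  # undivided fovea
    raise ValueError(f"unknown position: {position}")


def hemispheric_images(condition, split=True):
    """Union of halves per hemisphere after the central and second fixations."""
    first_fixation = {"bilateral": ["LVF", "RVF"], "LVF": ["LVF"], "RVF": ["RVF"]}
    images = {"LH": set(), "RH": set()}
    for position in first_fixation[condition] + ["fixated"]:
        projection = fixation_projection(position, split)
        for hemisphere in images:
            images[hemisphere] |= projection[hemisphere]
    return images


for condition in ("bilateral", "LVF", "RVF"):
    images = hemispheric_images(condition)
    print(condition, {h: sorted(halves) for h, halves in images.items()})
```

With `split=True`, only the bilateral condition leaves both hemispheres with both halves, whereas unilateral LVF presentation leaves the LH with only the right half and unilateral RVF presentation leaves the RH with only the left half. With `split=False`, every condition gives both hemispheres the complete image, which is why an undivided fovea would predict no difference between the conditions.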

Hypothesis of this study

There is a robust word-length effect in the reading of Chinese words, and it does not differ significantly between the hemispheres. Assuming that the absence of a length-by-hemisphere interaction in Chinese word recognition is due to the complex nature of the words themselves, such as the quantity of information they embody and the dense structure of the characters, we expected to find a bilateral advantage in the recognition of Chinese words, given that complexity seems to encourage the emergence of the bilateral effect. Metacontrol and the eye-saccade model proposed above also led us to expect a bilateral effect in Chinese. Furthermore, because the vertical orientation of text is normal in Chinese, Chinese words offer an opportunity for this effect to emerge. This opportunity does not exist in English, where vertically oriented words are atypical and unlikely to encourage normal reading behaviour.

In short, we used the bilateral paradigm to investigate the bilateral advantage in Chinese words with multiple characters. In the experiment reported below, we tested the specific hypothesis that the bilateral effect emerges in the normal reading of Chinese words of different lengths, varying complexity through the number of characters in a word.

Method

Participants

Twenty-five Taiwanese undergraduate and postgraduate students (11 males and 14 females), 18 to 25 years old, participated in the experiment. All were native speakers of Mandarin, right-handed, and had normal or corrected-to-normal vision.

Stimuli

The stimuli are listed in Appendices 1–3. There were three presentation conditions: right unilateral (see Figure 5A), left unilateral (see Figure 5B), and bilateral (bilateral visual field, BVF; see Figure 5C). With bilateral presentation, two identical stimuli were presented simultaneously in the two visual hemifields. In each condition there were 40 Chinese words, two, three, or five characters in length. The words were presented 1.5 degrees of visual angle from the fixation point. An arrow occupying 0.6–0.9 degrees of visual angle from the central fixation point was presented simultaneously with the Chinese stimuli to indicate which word participants should respond to [4].

Figure 5: (A) Unilateral presentation of 2-character words in the RVF. (B) Unilateral presentation of 3-character words in the LVF. (C) Bilateral and simultaneous presentation of 5-character words.

Procedures

On each trial, a fixation point was first presented for 1000 msec., followed by the stimulus for 2000 msec. and then a masking picture for 1000 msec. Participants were instructed to make lexical decisions by pressing designated buttons on the response box with their index fingers: the rightmost button with the right index finger for real words, and the leftmost button with the left index finger for nonwords.
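For readers replicating the procedure, the trial sequence can be encoded as a small timeline. This is a hypothetical sketch: the event names and the `RESPONSE_KEYS` labels are illustrative, and it assumes no gaps between events, since the text does not describe an intertrial interval or a separate response window.

```python
# Hypothetical encoding of the trial sequence; durations are taken from the text.
TRIAL_EVENTS = [
    ("fixation", 1000),  # fixation point, msec
    ("stimulus", 2000),  # LVF, RVF, or BVF word display, msec
    ("mask", 1000),      # masking picture, msec
]

# Illustrative labels for the response mapping described in the text.
RESPONSE_KEYS = {
    "word": "rightmost button (right index finger)",
    "nonword": "leftmost button (left index finger)",
}


def event_onsets(events):
    """Return (name, onset_ms) pairs and the total trial duration in msec."""
    onsets = []
    t = 0
    for name, duration in events:
        onsets.append((name, t))
        t += duration
    return onsets, t


onsets, total = event_onsets(TRIAL_EVENTS)
for name, onset in onsets:
    print(f"{name:>8} onset at {onset} ms")
print(f"trial length: {total} ms")
```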

Analysis

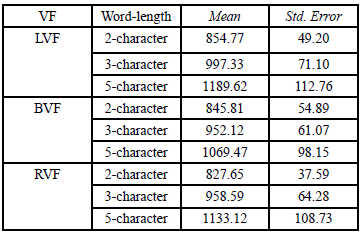

For the by-participant analysis, there were two within-participant variables: Visual Field (LVF, BVF, and RVF) and Word Length (two-, three-, and five-character words). For the by-item analysis, Visual Field was a within-item variable and Word Length was a between-item variable. The dependent variable was lexical decision latency. All analyses were repeated-measures analyses of variance performed with SPSS software.

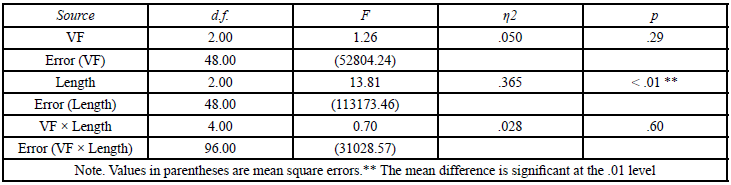

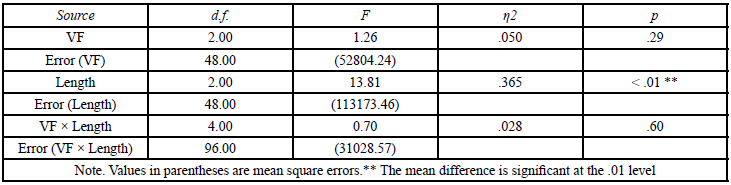

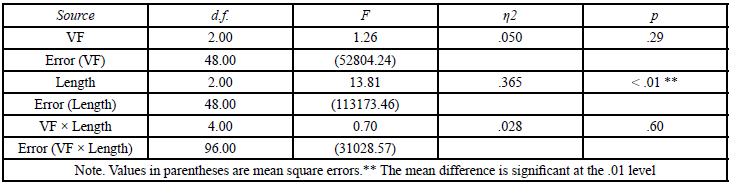

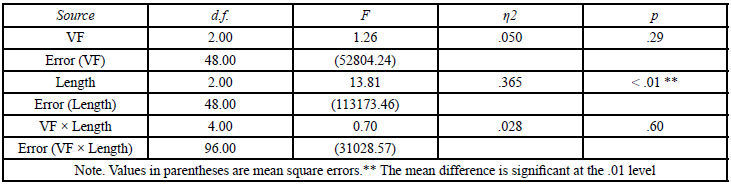

Results

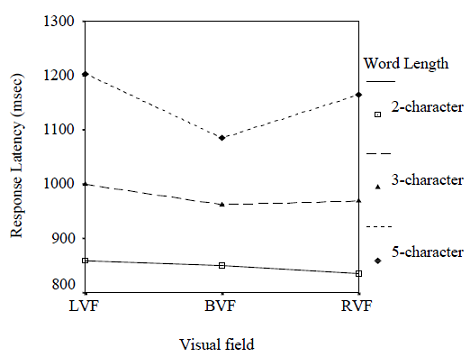

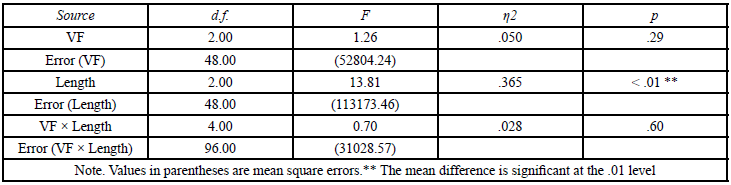

The results are presented below and illustrated in Figure 6 and Tables 1 to 4. Visual inspection of Figure 6 reveals a markedly V-shaped top line (five-character words), a slightly V-shaped middle line (three-character words), and a flat bottom line (two-character words). This pattern suggests that the bilateral effect became progressively stronger as word length increased. There was a significant main effect of Length with participant as the unit of analysis, FP (2, 48) = 13.81, p < .01, and with item as the unit of analysis, FI (2, 47) = 11.17, p < .01. The main effect of Visual Field was not significant, whether the unit of analysis was participant, FP (2, 48) = 1.26, p > .1, or item, FI (2, 48) = 0.69, p > .1. The Length by Visual Field interaction was not significant by participant, FP (4, 96) = .70, p > .1, or by item, FI (4, 96) = .02, p > .1.
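The by-participant degrees of freedom reported above follow directly from the 3 × 3 within-participant design with 25 participants. A minimal sketch, assuming uncorrected degrees of freedom (the text does not state whether a sphericity correction was applied); the factor labels "A" and "B" are placeholders:

```python
def rm_anova_df(levels_a, levels_b, n_subjects):
    """Uncorrected (effect, error) degrees of freedom for a two-way,
    fully within-subjects ANOVA."""
    df_a = levels_a - 1
    df_b = levels_b - 1
    df_ab = df_a * df_b
    df_subj = n_subjects - 1
    return {
        "A": (df_a, df_a * df_subj),      # main effect of factor A
        "B": (df_b, df_b * df_subj),      # main effect of factor B
        "AxB": (df_ab, df_ab * df_subj),  # A x B interaction
    }


# Visual Field (3 levels) x Word Length (3 levels), 25 participants
print(rm_anova_df(3, 3, 25))  # {'A': (2, 48), 'B': (2, 48), 'AxB': (4, 96)}
```

Each within-participant effect is tested against its own effect-by-participant error term, which is why the interaction's error degrees of freedom are (2 × 2) × 24 = 96.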

Discussion

The results indicate a tendency toward a V-shaped bilateral advantage, although the effect was not strong enough to yield a significant Length by Visual Field interaction. In the following sections, we discuss four factors suggested by these results as potentially critical to elicitation of the bilateral effect in the processing of Chinese words. They are (a) the complexity of Chinese words, (b) foveal splitting and the initial presentation of bilateral stimuli, (c) the information obtained from parafoveal vision, and (d) what we will term the Gestalt effect in perception.

Summary of results: the V-shaped effect

There was a V-shaped relationship across the three viewing conditions for the longest words. Recognizing the shortest, two-character words was the easiest task, and response latencies in all three presentation conditions were nearly equivalent, resulting in a flat line. When word length increased to three characters, latencies across the three presentation conditions changed slightly, indicating a tendency towards an RVF advantage. The strongest component of the V-shaped effect emerged when word length increased to five characters: word recognition deteriorated (latencies increased), and bilateral presentation produced the fastest responses.

Metacontrol

The response latencies suggest that unilateral RVF performance was similar to BVF performance. This result is consistent with the theory of metacontrol: the near-equivalence of BVF and RVF performance suggests that the LH is dominant in this task and exerted metacontrol, giving rise to similar reaction times in the BVF and RVF conditions regardless of word length.

Increasing complexity of Chinese words

A clear tendency towards the bilateral effect was observed only for the longest words, and the effect appeared to grow as complexity increased. Mechelli et al. (2000) [10] found that increasing word length increased the demands on the processing of both local features and global shapes; thus, the complexity of words increases with word length. Studies of Chinese word recognition have shown that Chinese words are unique with respect to word/morpheme segmentation. To identify a word successfully and make a correct lexical decision, Chinese readers must parse the individual characters into possible combinations of morphemes, because Chinese text uses the same spacing between words as within words. A two-character string can be segmented either as two one-character words or as a single two-character word. By comparison, the two-morpheme English word "watchstrap" requires correct segmentation between the letters "h" and "s" before the lexical decision (unless "watchstrap" is stored as a lexical item). In a Chinese word, the inter-character space is the same for all characters, whether or not they belong to the same morpheme. Thus, in the present study, when word length increased to three characters, the number of possible segmentations increased to four, as shown in Table 5. The possible segmentations of a five-character word are shown in Table 6. Such a word may have one to five morphemes, each consisting of one or more consecutive characters. Because each of the four boundaries between adjacent characters either is or is not a morpheme boundary, there are 2^4 = 16 possible segmentations.
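The counting argument can be checked by enumerating segmentations directly: an n-character string has n − 1 internal boundaries, each of which is either used or not, giving 2^(n − 1) segmentations. A minimal sketch, using placeholder letters in place of real Chinese characters and treating any contiguous run of characters as a candidate morpheme (that is, it ignores whether the run is an actual morpheme):

```python
from itertools import combinations


def segmentations(chars):
    """All ways to split a string into contiguous, non-empty chunks."""
    n = len(chars)
    results = []
    for k in range(n):  # k = number of internal boundaries used
        for cuts in combinations(range(1, n), k):
            bounds = (0, *cuts, n)
            results.append([chars[a:b] for a, b in zip(bounds, bounds[1:])])
    return results


# Placeholder strings standing in for 2-, 3- and 5-character words.
for word in ("AB", "ABC", "ABCDE"):
    print(len(word), len(segmentations(word)))
# 2 2
# 3 4
# 5 16
```

The counts match those given in the text for two-, three-, and five-character words. A reader must, in principle, rule out all but one of these candidate parses, which is one way of making the growth in task complexity with word length concrete.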

To perform an easy task, the two hemispheres may work independently. However, performing a more difficult task, such as recognizing five-character words, should require the two hemispheres to collaborate, resulting in a bilateral effect. This aspect of word recognition is different when reading Chinese than when reading English, as additional processing is needed to parse the Chinese characters into words.

Gestalt perception and redundancy gain

The bilateral effect appeared in the condition with the longest word length. A simple visual lexical decision task seems to benefit from bilateral presentation, in a way comparable to the single-character homophone and synonym-judgement tasks used by [8].

A possible interpretation of both sets of findings is that long words provide a greater number of identical units for comparison. Increasing redundancy enhances the Gestalt effect compared with simple words with fewer units [18]. The five-character words used in the present study were composed of a larger number of strokes than the two-character and three-character words. Thus, the Gestalt effect increased with word length, reaching its highest level with the five-character words.

When repeated items are presented simultaneously, they come to be seen as a unit or as connected, for example as parts of the same object or as sharing a common fate. This Gestalt effect, based solely on the number of stimuli presented in the display, is confounded with the bilateral effect, which raises the question of how the effect is best characterised. Because two stimuli cannot be presented at the same place at the same time, [21] presented two stimuli at the same place (the central fixation point) but asynchronously (SOA from 100 to 300 msec.). In another experiment in the series, they tested the ‘ignition priming’ of word recognition (SOA from 150 to 180 msec.). However, in neither experiment could they test the presentation of double stimuli at the fixation point with zero SOA.

Foveal splitting

Initial bilateral presentation: According to the model based on the split-fovea hypothesis discussed earlier, the bilateral projection in every bilateral trial (before viewers shift their eyes to one of the stimuli) has consequences for the prolonged processing of long words. The results suggest that bilateral projection tends to help word recognition even though it occurs only at the very start of the presentation. For most of the trial, while the five-character words are being read, only one of the two stimuli is directly fixated. Counterintuitively, this is the condition in which the bilateral effect was strongest.

Consider the first fixation, midway between the two identical stimuli. These two stimuli are visually registered along with the arrow indicating which hemifield is to be attended to. It is very doubtful that the longer words, or even any of their component characters, are recognized at this point. The next fixation and all subsequent fixations fall on the characters of the attended word. Consider now the processing of the characters towards the end of the fixated word, and, in particular, fixations on single characters. The left half of the character is projected to the RH and the right half to the LH. Normal recognition would involve the hemispheric integration of these two pieces of information. However, in the present experiment, the unattended word was still a potential source of information. If the fixated word is the stimulus on the left, then the left side of the corresponding character in the unattended word is parafoveally available to the LH and can thus supply it with information complementary to that from the right half of the fixated character; that is, the LH has immediate visual information about the whole character without relying on callosal transfer.

Foveal splitting and parafoveal vision

Besides redundancy gain (discussed above), another possible explanation of this bilateral effect involves foveal splitting and parafoveal vision. According to the model based on the split-fovea hypothesis proposed at the beginning of the present study, the initial stage of presentation must accomplish more than the later stages and/or have a facilitating effect. On this interpretation, the bilateral effect is prima facie evidence for foveal splitting, because (a) only if a word is divided into two halves at about the centre can bilateral presentation provide a complete image in one of the two hemispheres, and (b) only in the split-fovea model does the information projected to the hemispheres under bilateral presentation differ from that projected under unilateral presentation (see the second fixation of the bilateral presentation in Figure 2 for details). According to the models in Figures 1 to 4, if the fovea and the fixated words are not split at the centre (i.e., perception of the words is undifferentiated), then when the words are directly fixated (during the second fixation), each hemisphere has information about the complete image. According to the split-fovea hypothesis, by contrast, in the bilateral presentation condition of the present experiment one hemisphere had an image complementary to that in the other hemisphere, which distinguished the bilateral condition from the other presentation conditions. Unlike the split-fovea hypothesis, the non-split fovea hypothesis predicts no difference between the unilateral and bilateral viewing conditions in the present study, because each hemisphere has the same amount of information in both conditions.
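The contrast between the two hypotheses can be made concrete with a deliberately simplified toy model, offered only as an illustration and not as the model shown in Figures 1 to 4. It assumes, hypothetically, that each character can be reduced to a left and a right half; that under foveal splitting everything left of the fixation point (including the left half of the fixated character) projects only to the RH and everything to its right only to the LH; and, for the non-split case, that the whole fixated word reaches both hemispheres. The function names `projections` and `complete` are illustrative and introduced here.

```python
from itertools import product

HALVES = ("left", "right")


def projections(words, fixation, split_fovea=True):
    """Return the (character, half) pieces that reach each hemisphere.

    words: list of (start_position, length) pairs, one per displayed copy of the
        same word; positions are character slots along one horizontal line.
    fixation: (word_number, char_index) of the directly fixated character.
    split_fovea: if False, assume the whole fixated word reaches both hemispheres.
    """
    received = {"LH": set(), "RH": set()}
    fix_word, fix_char = fixation
    fix_pos = words[fix_word][0] + fix_char  # absolute slot of the fixated character
    for w, (start, length) in enumerate(words):
        for c, half in product(range(length), HALVES):
            piece = (c, half)  # copies are identical, so pieces are indexed by character
            if not split_fovea and w == fix_word:
                # Non-split assumption: the fixated word is represented bilaterally.
                received["LH"].add(piece)
                received["RH"].add(piece)
            elif start + c < fix_pos or (start + c == fix_pos and half == "left"):
                received["RH"].add(piece)  # left of fixation -> right hemisphere
            else:
                received["LH"].add(piece)  # right of fixation -> left hemisphere
    return received


def complete(received, length):
    """Report whether each hemisphere has both halves of every character."""
    full = set(product(range(length), HALVES))
    return {hemi: full <= pieces for hemi, pieces in received.items()}


if __name__ == "__main__":
    unilateral = [(0, 5)]          # one five-character word
    bilateral = [(0, 5), (10, 5)]  # identical copy added in the right hemifield
    fixation = (0, 3)              # fixate the fourth character of the left word
    for label, display in (("unilateral", unilateral), ("bilateral", bilateral)):
        for split in (True, False):
            result = complete(projections(display, fixation, split), 5)
            print(label, "split" if split else "non-split", result)
```

In this sketch, with a five-character word fixated on its fourth character, neither hemisphere receives every half-character under unilateral presentation when the fovea is split, whereas adding an identical word in the right hemifield gives the LH a complete image. Under the non-split assumption both hemispheres are already complete in the unilateral display, so bilateral presentation adds nothing, which mirrors the prediction of no unilateral/bilateral difference.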

We propose that the information captured in parafoveal vision played an important role in the bilateral advantage. In the bilateral condition, the initial “preview” can be considered bilateral; once participants shifted their eyes to the attended word, the other stimulus, which was not directly fixated, continued to provide information through the parafovea. Given that the five-character words took the longest to recognize, we propose that the final parts of the attended word attracted the participants’ fixations, while the other word, in the parafovea, assisted recognition of the target word. More broadly, therefore, we propose that parafoveal vision contributes to the bilateral effect in recognition tasks with long Chinese words.

Conclusion

There was a slight, non-significant tendency towards a bilateral advantage as word length increased. This tendency is consistent with the hypothesis that only if the complexity of a task increases to a critical level will the two hemispheres collaborate to complete it. The study addressed the issues of metacontrol, task complexity, Gestalt perception, redundancy gain, and the split-fovea hypothesis. The results support the hemispheric collaboration hypothesis only when the task load was heavy. They are consistent with the findings of Mohr et al. [4] and Monaghan and Pollmann [14], who reported that the left and right hemispheres collaborate rather than inhibit each other, each contributing its own processing of the same linguistic stimuli. Further studies are needed to clarify whether the bilateral advantage is caused entirely by hemispheric collaboration, because parafoveal vision, the Gestalt perception of repeatedly presented stimuli, or the sheer number of stimuli might also contribute to the effect.

Acknowledgement

This work is partly based on an unpublished PhD thesis submitted by the first author YJC to the School of Informatics, University of Edinburgh. We thank all the participants for taking part in the studies. The authors declare that there is no conflict of interest regarding the publication of this article.

Conflicts of Interest (COI) Statement

The authors have declared no conflict of interest.

References

Boles, D.B. (1995). Parameters of the bilateral effect. In F.L. Kitterle (Ed.), Hemispheric Communication: Mechanisms and Models. UK: Lawrence Erlbaum.

Banich, M.T. (1995). Interhemispheric interaction: Mechanisms of unified processing. In F.L. Kitterle (Ed.), Hemispheric Communication: Mechanisms and Models. UK: Lawrence Erlbaum.

Iacoboni, M., & Zaidel, E. (1996). Hemispheric independence in word recognition: Evidence from unilateral and bilateral presentations. Brain and Language 53: 121–140.

Mohr, B., Pulvermüller, F., & Zaidel, E. (1994). Lexical decision after left, right and bilateral presentation of function words, content words and non-words: Evidence for interhemispheric interaction. Neuropsychologia 32: 105–124.

Hellige, J.B., & Michimata, C. (1989). Visual laterality for letter comparison: Effects of stimulus factors, response factors and metacontrol. Bulletin of the Psychonomic Society 27: 441–444.

Hellige, J.B., Taylor, A.K., & Eng, T.L. (1989). Interhemispheric interaction when both hemispheres have access to the same stimulus information. Journal of Experimental Psychology: Human Perception and Performance 15: 711–722.

Bookheimer, S. (2001). How the brain reads Chinese characters. Neuroreport 12: A1.

Zhang, W.T., & Feng, L. (1998). The recognition of three attributes of Chinese words and the interhemispheric interaction. Acta Psychologica Sinica 30: 129–135.

Zhang, W.T., & Feng, L. (1999). Interhemispheric interaction affected by identification of Chinese characters. Brain and Cognition 39: 93–99.

Mechelli, A., Humphreys, G.W., Mayall, K., Olson, A., Price, C.J. et al. (2000). Differential effects of word length and visual contrast in the fusiform and lingual gyri during reading. Proceedings of the Royal Society of London B 267: 1909–1913.

Banich, M.T. (1998). The missing link: The role of interhemispheric interaction in attentional processing. Brain and Cognition 36: 128–157.

Banich, M.T., & Belger, A. (1990). Interhemispheric interaction: How do the hemispheres divide and conquer a task? Cortex 26: 79–94.

Weissman, D.H., & Banich, M.T. (1999). Global-local interference modulated by communication between the hemispheres. Journal of Experimental Psychology: General 128: 283–308.

Monaghan, P., & Pollmann, S. (2003). Division of labour between the hemispheres for complex but not simple tasks: An implemented connectionist model. Journal of Experimental Psychology: General 132: 379–399.

Zhang, W.T., & Feng, L. (1992). A study on the unit of processing in recognition of Chinese characters. Acta Psychologica Sinica 24: 379–385.

Chen, Y.P., Allport, D.A., & Marshall, J.C. (1996). What are the functional orthographic units in Chinese word recognition: The stroke or the stroke pattern? Quarterly Journal of Experimental Psychology Section A: Human Experimental Psychology 49: 1024–1043.

Pelli, D.G., Burns, C.W., Farell, B., & Moore-Page, D.C. (2006). Feature detection and letter identification. Vision Research 46: 4646–4674.

Pelli, D.G., Farell, B., & Moore, D.C. (2003). The remarkable inefficiency of word recognition. Nature 423: 752–756.

Cohen, L., Dehaene, S., Naccache, L., Lehéricy, S., & Dehaene-Lambertz, G. (2000). The visual word form area: Spatial and temporal characterization of an initial stage of reading in normal subjects and posterior split-brain patients. Brain 123: 291–307.

Koffka, K. (1935). Principles of Gestalt Psychology. New York: International Library of Psychology, Philosophy and Scientific Method.

Mohr, B., & Pulvermüller, F. (2002). Redundancy gains and costs in cognitive processing: Effects of short stimulus onset asynchronies. Journal of Experimental Psychology: Learning, Memory, and Cognition 28: 1200–1223.

Pulvermüller, F. (1999). Words in the brain's language. Behavioral and Brain Sciences 22: 253–279.

Anstis, S. (1974). A chart demonstrating variations in acuity with retinal position. Vision Research 14: 589–592.